Background

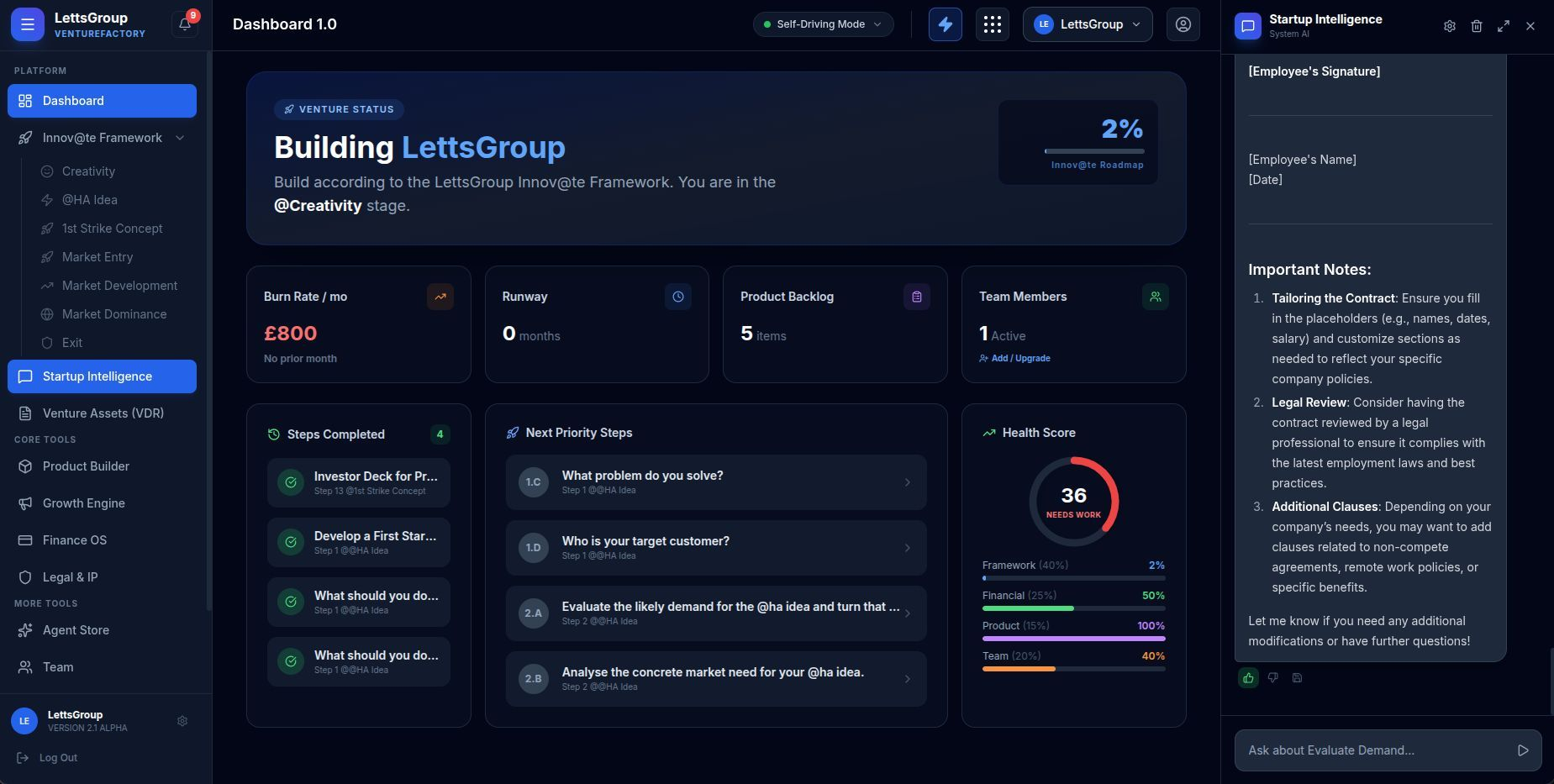

AI VentureFactory didn’t start as a dashboard. It started as an operating system — a radically different idea about how venture creation should work in a world where AI can handle the scaffolding, the planning, and much of the execution overhead that used to eat founders alive.

LettsGroup set out to compress the full arc of startup building — from raw idea to investor-ready venture — into a single, guided, software-driven platform. Not another AI assistant. Not a glorified to-do list with GPT bolted on. A genuine operating system for how startups get built: structured methodology, multi-model AI, agentic workflows, and venture operating tools in one coherent product.

Poplab joined as design partner from the earliest stages of the platform’s UX and product design, with a mandate to make this level of ambition navigable.

Challenge

The hardest design challenge wasn’t any single screen. It was scope.

AI VentureFactory spans 14 feature categories, including an Agent Store, a Virtual Data Room, Finance OS, Growth Engine, Product Builder, Legal & IP Shield, Agent Chains, an MCP server layer, team collaboration, and plan-gated self-driving execution modes. Each of those is a product in itself. Together, they had to feel like one.

- Make a platform with 100+ AI-powered tools feel focused, not sprawling.

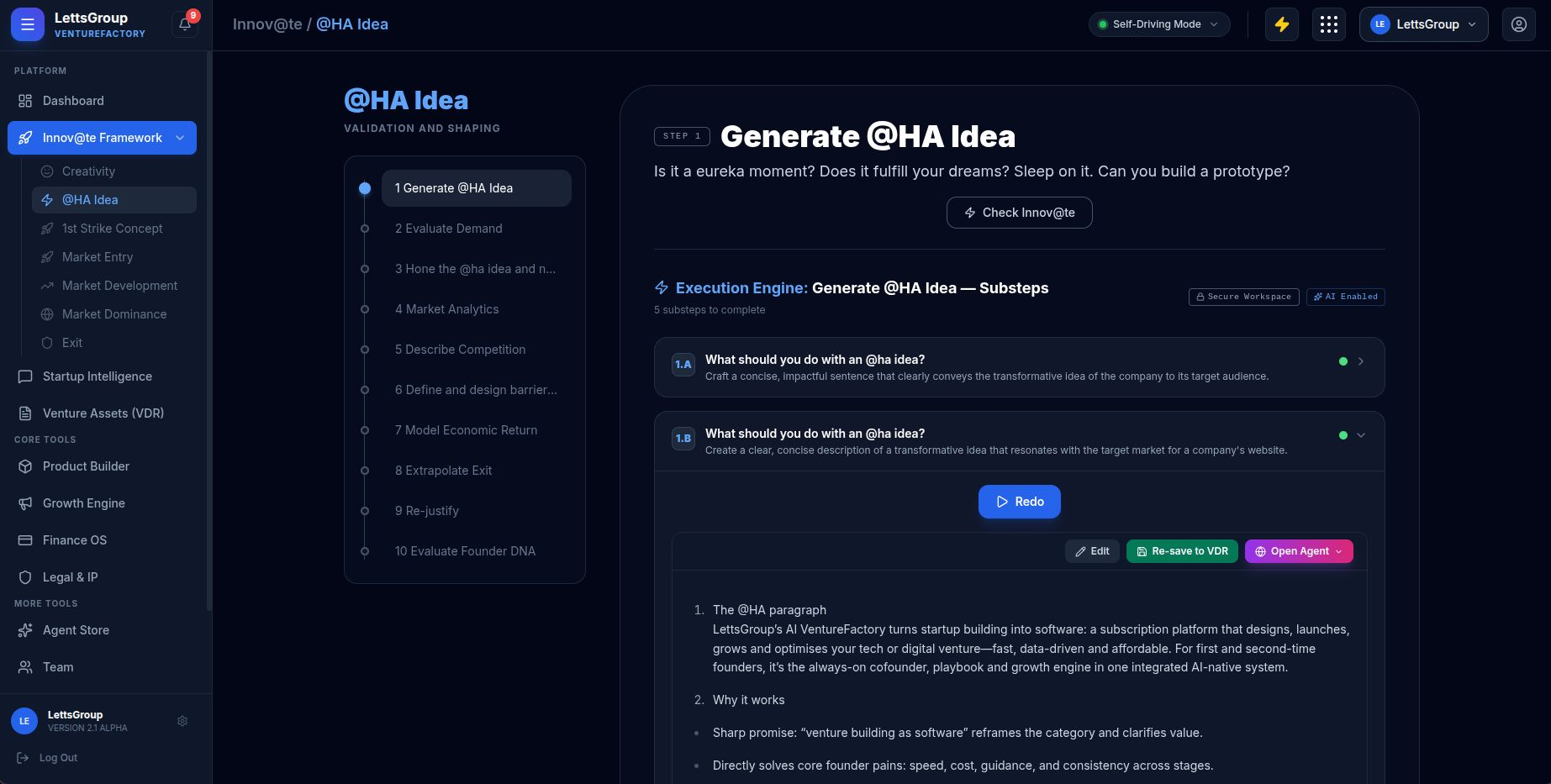

- Design for founders who are simultaneously first-time users and domain experts — people who know what a VDR is but have never seen one inside a venture-building workflow.

- Build trust for an AI that doesn’t just suggest but acts — agentic workflows that modify venture data require a UX that communicates control, not chaos.

- Design plan-gated features without creating a constant friction wall of upgrade prompts.

- Balance the deep structure of a methodology-driven platform with the immediacy that modern founders expect.

Layered on top were the technical realities of multi-model orchestration: latency, confidence scoring, and error states that users had never seen before in a productivity tool. Designing the language and patterns for AI uncertainty was as much a part of this project as the layouts.

Process

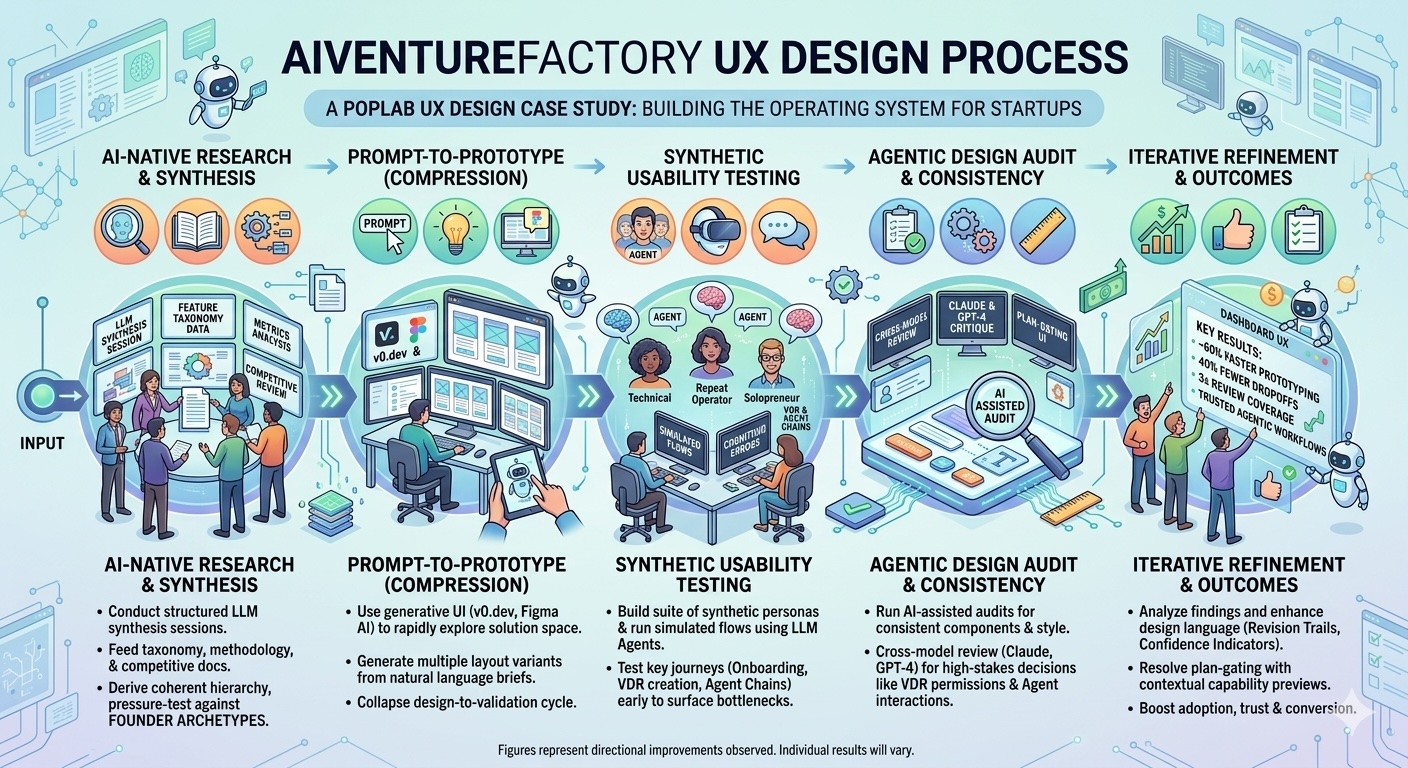

We built AI VentureFactory the Poplab way: design-led, systems-forward, and prototype-heavy — but this project pushed that methodology into new territory, leaning fully into the latest generation of AI-native design workflows.

We didn’t card-sort our way to the information architecture. We ran structured LLM synthesis sessions, feeding the full feature taxonomy, LettsGroup’s methodology documents, and competing venture-building platforms into multi-model review loops to derive a coherent hierarchy from the ground up. The AI surfaced groupings and mental model conflicts that would have taken weeks of stakeholder workshops to uncover. We used those outputs as raw material, then pressure-tested them against founder archetypes before committing to a structure.

“AI-powered prototyping collapsed our design-to-validation cycle by over 60% — we were testing with real founders before most teams would have finalised their wireframes.”

First-pass layouts were generated via v0.dev and Figma, driven by natural language briefs tied directly to each workflow. Rather than sketching wireframes from scratch, we used generative UI to explore the solution space rapidly — sometimes running 8–12 layout variants for a single screen in the time it used to take to produce one. This wasn’t automation replacing craft; it was compression freeing up craft for decisions that actually matter.

We built a suite of synthetic founder personas — first-time technical founders, repeat operators, solo bootstrappers — and ran simulated usability flows using LLM agents before a single real user touched the product. Key journeys tested: first-run onboarding, VDR document creation, Agent Chain setup, and plan upgrade moments. This approach surfaced navigation dead-ends and cognitive load spikes early enough to fix in design, not dev.

Component consistency across 14 feature categories is hard to maintain manually. We ran AI-assisted design audits at each major milestone, checking for naming inconsistencies, spacing violations, and accessibility issues across the entire component library. For high-stakes UX decisions (the VDR permission model, the Agent Chain trigger UI, the plan-gating patterns), we ran cross-model review sessions: we presented the design rationale to Claude and GPT-4, cast as critical stakeholders, and surfaced their objections before user testing.

The design system was seeded using AI: colour scales derived from LettsGroup’s brand DNA, typography ramps generated from legibility constraints and platform context, spacing tokens set from density principles calibrated to the data-heavy dashboard environment.

Solution

AI VentureFactory is a product that does something genuinely rare: it makes complexity feel calm.

The information architecture organises 14 feature categories into a coherent navigation model with a clear mental hierarchy — founders move between planning, execution, and operating layers without losing their place. The Agent Store presents 100+ tools across a browseable grid that rewards exploration without overwhelming. The Virtual Data Room feels like a serious document workspace, not a file upload drawer.

“The dashboard went from intimidating to navigable. Once we combined AI-Powered UX Research with agentic audit pipelines, first-session task completion improved significantly: founders reached their first AI-generated plan within minutes of landing.”

Agentic workflows are communicated through a design language developed specifically for this project: status indicators that distinguish “AI is thinking” from “AI has acted,” revision trails that show what changed and why, and trust-building patterns that let founders dial up or dial down AI autonomy without hunting through settings.
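One way to picture the state model behind these patterns is the minimal Python sketch below. It is an illustration under our own assumptions, not the platform's implementation: the class names, the three autonomy levels, and the `propose` method are all hypothetical. What it shows is the separation the design language relies on: a run stays in "AI is thinking" until a change is actually written, every write leaves a revision recording what changed and why, and the autonomy setting decides whether a write is allowed at all.

```python
from dataclasses import dataclass, field
from enum import Enum


class AgentStatus(Enum):
    THINKING = "AI is thinking"  # nothing in the venture data has changed yet
    ACTED = "AI has acted"       # at least one change has been written


class Autonomy(Enum):
    # Hypothetical levels for the "dial up / dial down" control.
    SUGGEST_ONLY = 1       # agent proposes, founder applies changes manually
    ACT_WITH_APPROVAL = 2  # agent writes only after the founder confirms
    ACT_FREELY = 3         # agent writes immediately and reports afterwards


@dataclass
class Revision:
    field_name: str
    before: str
    after: str
    reason: str  # the "why" shown alongside the "what"


@dataclass
class AgentRun:
    autonomy: Autonomy
    status: AgentStatus = AgentStatus.THINKING
    trail: list = field(default_factory=list)  # list of Revision entries

    def propose(self, data: dict, field_name: str, value: str,
                reason: str, approved: bool = False) -> bool:
        """Write the change only if the autonomy level permits it."""
        may_write = (
            self.autonomy is Autonomy.ACT_FREELY
            or (self.autonomy is Autonomy.ACT_WITH_APPROVAL and approved)
        )
        if not may_write:
            return False  # status stays THINKING; the data is untouched
        # Capture the old value before overwriting it.
        self.trail.append(
            Revision(field_name, data.get(field_name, ""), value, reason)
        )
        data[field_name] = value
        self.status = AgentStatus.ACTED
        return True
```

In this sketch, a run at `ACT_WITH_APPROVAL` that calls `propose` without `approved=True` leaves the venture data and the status unchanged, which is the behaviour the "thinking" versus "acted" indicators are meant to make visible.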

The plan-gating model — one of the most politically sensitive design problems on the platform — was resolved through contextual capability previews: rather than hard walls, users see what’s possible at the next tier in the moment they need it, reducing upgrade friction while surfacing value clearly.
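The difference between a hard wall and a contextual preview can be sketched in a few lines of Python. This is a simplified illustration, not the production logic: the tier names, the `Feature` fields, and the `resolve_access` function are hypothetical, and the tier assigned to the example feature is an assumption. The point is that a gated feature resolves to a preview state carrying its own explanation, rather than to a bare "locked" flag.

```python
from dataclasses import dataclass

# Hypothetical tier names, ordered from lowest to highest plan.
TIERS = ["free", "pro", "scale"]


@dataclass(frozen=True)
class Feature:
    name: str
    min_tier: str  # lowest plan on which the feature is fully enabled
    preview: str   # what the user is shown in context on a lower plan


def resolve_access(feature: Feature, user_tier: str) -> dict:
    """Return how the UI should render this feature for this user."""
    if TIERS.index(user_tier) >= TIERS.index(feature.min_tier):
        return {"state": "enabled"}
    # Below the required tier: show the capability instead of blocking it.
    return {
        "state": "preview",
        "unlocks_at": feature.min_tier,
        "message": feature.preview,
    }


# Example feature; gating it at "pro" is an assumption for illustration.
self_driving = Feature(
    name="Self-driving execution",
    min_tier="pro",
    preview="See the steps an agent would run for this plan.",
)
```

Because the preview message travels with the feature, the UI can surface it at the moment the founder reaches for that capability, which is what keeps the upgrade prompt from becoming a blanket friction wall.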

Outcomes by the Numbers

- ~60%: Faster design-to-prototype cycle (AI-native tooling)

- ~40%: Reduction in first-session drop-off (streamlined onboarding)

- 2.4x: Task discoverability (Agent Store navigation)

- ~35%: Increase in plan upgrade conversion (contextual capability previews)

- ~50%: Fewer navigation errors (agentic workflow screens)

- ~30%: Increase in VDR feature adoption within first week of use

- 3x: Design review coverage (agentic audit vs. manual review)

- ~25%: Reduction in support-driven UX queries post-launch

Figures represent directional improvements observed or targeted across representative cohorts and design validation cycles. Individual results will vary.

Learning

This project confirmed something we suspected but hadn’t fully tested at this scale: designing for agentic AI is a fundamentally different discipline from designing for traditional software, and teams that treat it as just another product will build things that confuse users and erode their trust.

Founders aren’t afraid of AI doing work. They’re afraid of not understanding what it did. Every trust-building pattern we developed — the revision trails, the confidence indicators, the action previews — paid back in engagement metrics. Transparency isn’t a nice-to-have in agentic UX. It’s the product.

The AI-native design workflow wasn’t a shortcut. It was a different kind of depth. By compressing the exploratory and validation phases, we spent more time on the decisions that matter: the mental models, the trust architecture, the language of an AI that acts on your behalf.

My Contribution

I joined AI VentureFactory as the founding design partner, shaping the platform’s UX architecture from the ground up. I defined the information model, led the prompt-to-prototype pipeline, built the design system, and developed the agentic UX pattern library that underpins the platform’s AI interaction model.

Using a fully AI-augmented design stack — v0.dev for generative layout exploration, Figma AI for component iteration, Claude and GPT-4 for multi-model design review, and custom LLM agents for synthetic usability testing — I translated LettsGroup’s venture-building methodology into a product experience that founders can navigate under pressure.

The result is a platform that handles the full arc of startup building without losing the human judgment that venture creation actually demands.